Our weekly Briefing is free, but you should upgrade to access all of our reporting, resources, and a monthly workshop.

This week on Indicator

Craig revealed that AI-generated influencers on Instagram posted fake photos with celebrities like The Rock and Cristiano Ronaldo to build a following, and then funneled people to a Fanvue page selling synthetic explicit content. Meta removed at least a dozen accounts as a result of our reporting.

He was also quoted in The Verge’s story, “How the experts figure out what’s real in the age of deepfakes.”

We dropped our latest episode of Show & Tell! Clara Jiménez Cruz, co-founder and CEO of Maldita.es, a Spanish nonprofit news organization, gave a hands-on look at three investigations that exposed how platform systems enable scams, monetized deception, and child exploitation.

Watch and subscribe on YouTube or on Spotify and Apple Podcasts. Also, scroll down to the Tools & Tips section of this newsletter to learn how the episode led to a new feature in a great set of free OSINT tools!

X decides to fight (some) disinformation. Will it work?

On March 3, X did something unusual. It behaved like a normal platform.

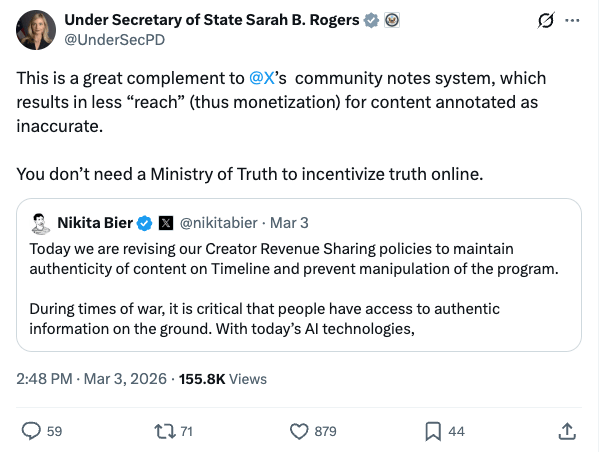

Faced with a rising tide of misinformation about the US-Israel conflict with Iran, head of product Nikita Bier announced that X will suspend payouts to creators “who post AI-generated videos of an armed conflict—without adding a disclosure that it was made with AI.”

Even more striking was the reaction from Sarah Rogers, a high-ranking official in Donald Trump’s State Department who played a role in the move to restrict US travel for five people who research disinformation or help regulate digital platforms. Rogers called X’s new policy “a great complement to @X’s community notes system, which results in less ‘reach’ (thus monetization) for content annotated as inaccurate.”

The hypocrisy behind the declarations is bewildering. Elon Musk and the Trump administration have repeatedly attacked trust and safety and disinformation interventions as tools of censorship.

But let’s set the about-faces aside to assess X’s countermeasure on its merits.

Bier wrote that X will identify AI-generated fakes using Community Notes or by detecting metadata that identifies the content as synthetic.

These are sensible but imperfect signals. Indicator’s October audit of AI labels found that metadata is unevenly applied to AI-generated content and inconsistently detected by platforms.

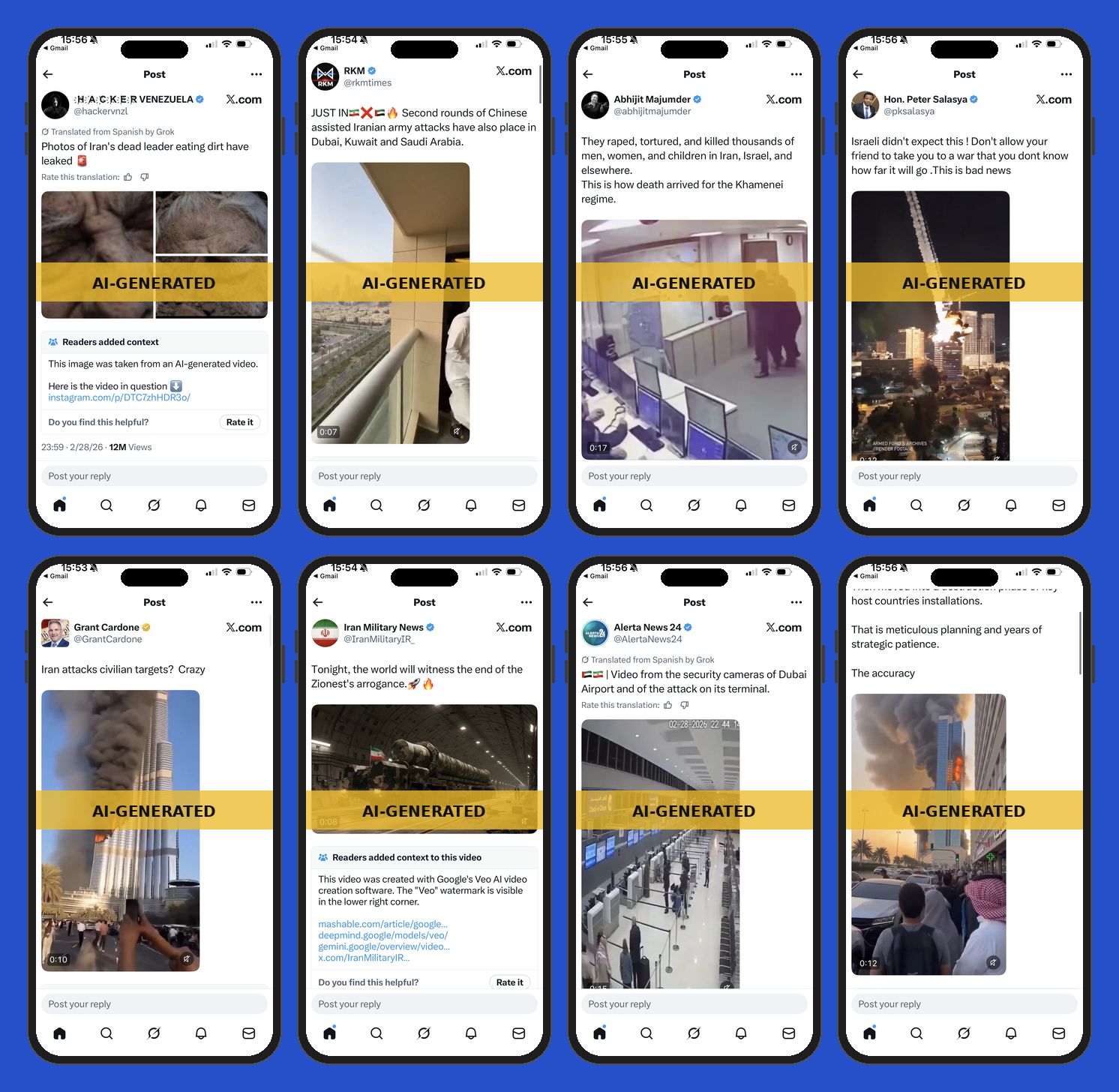

Using Community Notes to enforce the policy is a more interesting approach, and one that’s unique to X. Looking at notes published by Monday night (the data is released with a lag), I found 11 tweets with a “helpful” note that flagged them for unlabeled or misleading AI-generated multimedia about the Iran war. The tweets had over 36 million views and included deepfake videos of destruction in Dubai and synthetic images of Ayatollah Khamenei’s corpse.

There is an obvious limitation here. The Community Notes bridging algorithm requires that people who previously disagreed see eye-to-eye on a note before it’s deemed “helpful” and shown on X. It means that a lot of accurate notes never reach “helpful” status.
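To make the bridging requirement concrete, here is a toy sketch in Python. It is not X's actual scoring code, which is open source and based on matrix factorization. The two rater groups, the `min_ratings` and `threshold` parameters, and the function name are all assumptions made for illustration.

```python
from collections import defaultdict


def is_helpful(ratings, min_ratings=2, threshold=0.6):
    """Toy bridging check (an illustrative assumption, not X's algorithm).

    ratings: list of (rater_group, found_helpful) tuples, where rater_group
    is a hypothetical label for raters who tended to disagree in the past.
    A note is shown only if every group has enough ratings and a high
    enough share of "helpful" votes.
    """
    by_group = defaultdict(list)
    for group, found_helpful in ratings:
        by_group[group].append(found_helpful)

    # Bridging needs at least two groups that usually disagree.
    if len(by_group) < 2:
        return False

    for votes in by_group.values():
        if len(votes) < min_ratings:
            return False  # not enough ratings from this group
        if sum(votes) / len(votes) < threshold:
            return False  # this group does not agree the note helps
    return True


# An accurate note that only one side has rated stays hidden.
one_sided = [("A", True), ("A", True), ("A", True)]
# A note both sides rate as helpful is displayed.
bridged = [("A", True), ("A", True), ("B", True), ("B", True)]

print(is_helpful(one_sided))
print(is_helpful(bridged))
```

The first note can be entirely accurate and still never appear, simply because raters from the other group have not weighed in.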

The consequences are evident in the data I reviewed. I found at least 38 proposed notes for tweets that contained unlabeled AI videos or images about the war but that didn’t have enough ratings to be displayed on X. Presumably, the platform won’t use such notes to penalize creators. That means a lot of unlabeled and deceptive AI-generated war content will continue to earn money.

Sticking with our sample, the tweets that earned the 38 proposed notes collectively had 47 million views — more than the tweets with helpful notes. That’s a lot of potentially monetized falsehoods.

It’s also noteworthy that of the 49 notes in my sample, 21 used a fact-checking website as a source. This once again shows that dedicated journalistic debunking operations are an essential part of a crowdsourced fact-checking effort.
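For readers who want the proportions behind those figures, here is a quick back-of-the-envelope calculation in Python. It assumes one helpful note per flagged tweet (which matches the total of 49) and treats "over 36 million" as exactly 36 million, so the view share is approximate. The variable names are mine.

```python
# Figures reported above, in millions of views where noted.
helpful_views_m = 36       # views on the 11 tweets with a "helpful" note
unshown_views_m = 47       # views on tweets behind the 38 proposed notes
helpful_notes = 11
proposed_notes = 38
fact_check_sourced = 21    # notes citing a fact-checking website

total_notes = helpful_notes + proposed_notes
unshown_view_share = unshown_views_m / (helpful_views_m + unshown_views_m)
fact_check_share = fact_check_sourced / total_notes

print(total_notes)
print(f"{unshown_view_share:.0%}")
print(f"{fact_check_share:.0%}")
```

On these numbers, roughly 57% of the views in the sample went to tweets whose notes were never displayed, and about 43% of all notes leaned on a fact-checking site.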

To be clear, X’s new policy is a step in the right direction. But the platform has more work to do to dissuade its users from spreading false information.

For one, not all disinformation is a deepfake. Sticking just to multimedia, viral hoaxes about the war have leveraged video game footage and out-of-context videos.

More fundamentally, X’s engagement model has turbocharged the incentive to post sensationalist content that’s endemic to most algorithmically driven platforms. Users have to pay for X Premium in order to receive additional visibility in the timeline. Once inside the blue-check program, they can earn payouts based on the amount of engagement with their content.

All of the accounts in my sample that received a Community Note for sharing misleading war footage had a blue tick. That means they were eligible to earn money for their content. One of them, businessman Grant Cardone, has neither deleted nor apologized for sharing an AI-generated video of the Burj Khalifa on fire. He even ignored Bier’s invitation to label the content as AI. Perhaps that’s why we got this new policy. — Alexios

Read more about digital deception and the US-Israeli strikes on Iran:

Hackers hit Iranian apps, websites after US-Israeli strikes (Reuters)

Google’s “AI Overviews” Supercharge Iran Hoaxes (NewsGuard)

Misinformation, AI images related to Iran conflict spreading online (CTV News)

How AI fakes are turning satellite images into war misinformation (Financial Times)

Iran, Pakistan, Kabul? Grok Maps 3 Different 'Facts' For The Same Viral Video (BOOM)

A redesigned and updated Academic Library!

Indicator serves researchers who study digital deception, journalists and investigators who expose it, and tech workers who build interventions to combat it.

One way we try to bring our three audiences together is through our Academic Library, a curated digest of academic studies and industry reports about digital deception. It helps journalists, tech workers, and researchers ground their work in evidence and stay on top of this emerging field.

We recently redesigned the Library and updated it to include 75 papers and industry reports. Each entry has a brief blurb with a key takeaway. We do our best to highlight the actionable findings in the most interesting reports.

The Library covers the prevalence and effects of disinformation and AI-generated harmful content, and details notable research about countermeasures. You’ll find studies about Community Notes, fact-checking, synthetic nudes, platform manipulation, and more.

Check it out and let us know what you think at [email protected].

Deception in the News

📍 In more X news, the company announced it was rolling out a “Paid partnership” label for creators to use when posting sponsored content. “While we want to encourage people to build their businesses on X, undisclosed promotions hurt the integrity of the product and lead people to distrust the content they read on X,” tweeted Nikita Bier, the platform’s head of product. X lags behind other platforms in offering such a label. And our reporting has shown that individual creators and industrial-sized UGC campaigns often actively avoid labeling their content, for fear of ruining the “organic” nature of the campaign. But as with X’s new policy about unlabeled AI-generated war content, it’s better late than never. – Craig

📍 Meta sued four advertisers that impersonated celebrities and brands to scam people. The advertisers were based in Brazil, China, and Vietnam.

📍 Vietnam’s AI law requiring clear labels on photorealistic AI content went into effect on March 1.

📍 A bipartisan bill introduced in the US Senate would create a new offense under the Communications Act “to prohibit falsely posing as a real or imaginary individual through a highly realistic digital impersonation with the intent to defraud a person of money or other things of value.”

📍 404 Media reports that scammers are trying to phish clients of email marketing platforms by targeting them with an email that claims all their emails will include a “Support ICE” footer. The malicious actors aimed to collect the usernames and passwords of clients who tried to deactivate the footer.

Tools & Tips

This was a notable week for tool launches and updates. Here’s a rundown of three key tools/toolsets to know about, plus a few more items of note. — Craig

1. Dark Light Viewer

Benjamin Strick launched Dark Light Viewer, “a new free open source tool that lets anyone track how cities, conflict zones, developments and communities change at night over time.”

The core idea is that a notable event or issue in a specific area may be accompanied by a change in the amount of nighttime light. Strick’s tool uses Google Earth Engine, satellite imagery, and data from a sensor called VIIRS (Visible Infrared Imaging Radiometer Suite) that captures precise measurements of light intensity. The tool makes it easy to calculate and visualize increases or decreases in the amount and intensity of nighttime light. You can also compare the most recent nighttime light capture with data from one month, one year, five years, or 10 years ago.

The tool includes this description:

“Every night, satellites record how much artificial light reaches space from every point on Earth. This tool compares those readings across time, showing where light has increased or decreased — and by how much. It can't tell you why. But it can tell you something changed.”
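The comparison at the heart of that description is a simple percent change between two readings. Here is a minimal Python sketch of it. This is not Strick's code; the function name and the sample readings are made up for illustration.

```python
def percent_change(baseline, current):
    """Percent change in nighttime light between two readings.

    Both values are hypothetical radiance readings for the same area
    (VIIRS data is commonly expressed in nW/cm^2/sr). The baseline
    must be positive.
    """
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (current - baseline) / baseline * 100.0


# Made-up readings for one location, keyed by the age of the capture.
readings = {"latest": 6.0, "1 month ago": 7.5, "1 year ago": 20.0}

for label, value in readings.items():
    if label != "latest":
        change = percent_change(value, readings["latest"])
        print(f"vs {label}: {change:+.1f}%")
```

A negative result means the area is darker now than at the earlier capture. As the tool's own description says, the number tells you that something changed, not why.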

Strick wrote a detailed and helpful overview of Dark Light Viewer that includes case studies to show how it can be useful to investigators. He offered a list of scenarios where it can be applied. Here are two of them:

Investigative journalism and OSINT: If a government says a city is functioning normally but nighttime light has dropped 70%, that gap is a story. Useful for reporters verifying claims in areas they cannot physically reach.

Economic and development research: Track post-disaster economic recovery, measure whether aid investment is producing visible activity, or cross-check official GDP figures in countries where government statistics are unreliable.

The code is open source and is available here.

2. Forensic OSINT’s free toolkit

The folks behind the Forensic OSINT web capture tool launched a page with six free, browser-based tools.

IP Lookup (VPN/proxy detection + court-ready PDF)

Username Search

Domain-to-IP with security scoring

Email Header Analyzer

Image EXIF Reader

Timestamp Decoder

You can run more searches if you register for free. Ritu Gill, a respected member of the OSINT community and a cofounder of Forensic OSINT, said that they do not track or store any search data.

⮕ If you want to learn more about Forensic OSINT and other web capture tools (free and paid), check out the Indicator Guide to tools for capturing web pages and social media content.

3. New and updated My OSINT Training bookmarklets

Our friends at MOT got inspired by the latest episode of Show & Tell and added an awesome feature to their free OSINT bookmarklets. (Why are bookmarklets so useful for digital investigations? Read my recent guide to using them.)

During the show, Clara showed a spreadsheet that her team created to collect data about TikTok accounts. We commiserated about how we have to manually paste the profile info extracted by a MOT bookmarklet (such as the user ID, account creation date, etc.) into a Google Sheet.

Well, Micah Hoffman from MOT saw the episode and added an "Export to Excel" button to more than 20 of their bookmarklets! Now you can press a button to copy the account info and instantly paste it into a spreadsheet. See the above image to get a sense of what it looks like.

📍 Dennis Keefe did an interesting write-up of how to use unclaimed property sites like missingmoney.com to assist with digital investigations. He explains how you can identify an address associated with an individual, search for information about a company, and look for beneficiaries and co-owners associated with unclaimed money.

📍 The Open Source Munitions Portal launched a new collection of munition images related to the war in Iran. It includes “all images of munitions in the OSMP archive used by Israel, the United States, and Iran during the 2026 Iran war. It includes munition debris found in Iran, Israel, and multiple other states in the Middle East.”

📍 Hanan Zaffar and the Global Investigative Journalism Network published, “Using Satellite Imagery to Investigate War Crimes.”

📍 The latest OSINTIRL video is, “OSINT Investigators: Stop Trusting Tools Without Vetting.”

📍 Geolocation savant Rainbolt did a quick, instructive video about how to pull information from the bar code displayed on an airline boarding pass. Interesting info, and a good reminder about privacy.

Events & Learning

📍 The EU Disinfo Lab is hosting a free webinar on March 10, “DSA: Unfolding the European Commission’s first decision against X.” Info and registration here.

📍 OSINT Industries is giving a free webinar on March 23, “Unmasking Iran’s Cyber Fronts: An OSINT Guide to IRGC Attribution.” Info and registration here.

📍 The International Consortium of Investigative Journalists and OpenCorporates are hosting a free webinar on March 26, “The Modern Money Trail.” Info and registration here.

📍 Indicator will host its first in-person event at the International Journalism Festival in Perugia, Italy on April 16. We’ll be sharing more details soon but if you’re a paid member and will be in Perugia, let us know so we can invite you for food and drinks. If you’re attending IJF, why not become a member so you can attend?

Reports & Research

📍 A group of researchers described in a preprint how an LLM agent can be used to identify anonymized accounts with high precision (but lower recall). They used Hacker News comments and the Anthropic Interviewer dataset as case studies. They wrote that “the practical obscurity protecting pseudonymous users online no longer holds and that threat models for online privacy need to be reconsidered.” (I’m not fully sold on this conclusion based on the findings of the paper alone. But it’s clear that mass deanonymization is a legitimate concern due to the cost, speed, and scale at which LLM agents can operate. – Alexios)

📍 The European Fact-Checking Standards Network published a white paper describing and denouncing the rollback of tech companies’ commitments to information quality. It pairs well with this FT report on the transatlantic battle over content moderation.

📍 Conspirador Norteño wrote about 20 US-themed Facebook pages that are managed by accounts in countries including Sri Lanka, Pakistan, Finland, Bangladesh, and Romania.

📍 A network of over 200 AI slop websites was part of an operation to siphon ad revenue away from legitimate sites, according to a new report from DoubleVerify, a digital ad tech company. “Viewed individually, each domain appears to be an independent lifestyle blog,” the company said. “But they all have the same AI-generated articles and images and are optimized for ad delivery, not user experience.” The researchers found that the sites’ JavaScript code mistakenly included some of the prompts used to create the content.

One More Thing

The Norwegian Consumer Council published a hilarious and effective PSA called “A Day in the Life of an Ensh*ttificator.” It concludes with these lines: “It’s not just your imagination. Digital services are getting worse. Luckily, it doesn’t have to be this way.” You should watch it:

One of the most liked comments is perfection:

Indicator is a reader-funded publication.

Please upgrade to get access to all of our content, including our how-to guides and Academic Library, and to our live monthly workshops.