Our weekly Briefing is free, but you should upgrade to access all of our reporting, resources, and a monthly workshop. If you’re already a member, we thank you for your support!

This week on Indicator

Alexios spoke to Glenn Kessler, aka The Washington Post’s Fact Checker, about his decision to accept a buyout and how fact-checking has evolved since 2011. Alexios also continues to despair that TikTok is unwilling to take down celebrity giveaway scam videos that he wrote about in July.

Craig gave an inside look at a digital investigative experiment that helped prove a key point in a major lawsuit against Meta.

Craig will host our second members-only workshop today at 12 pm ET. He’ll cover some of the tools and techniques outlined in The Indicator guide to connecting websites together using OSINT tools and methods. If you upgrade now you can join and get access to the video, slides, and transcript. Existing members can reply to this email to receive the link for today’s session.

Deception in the News

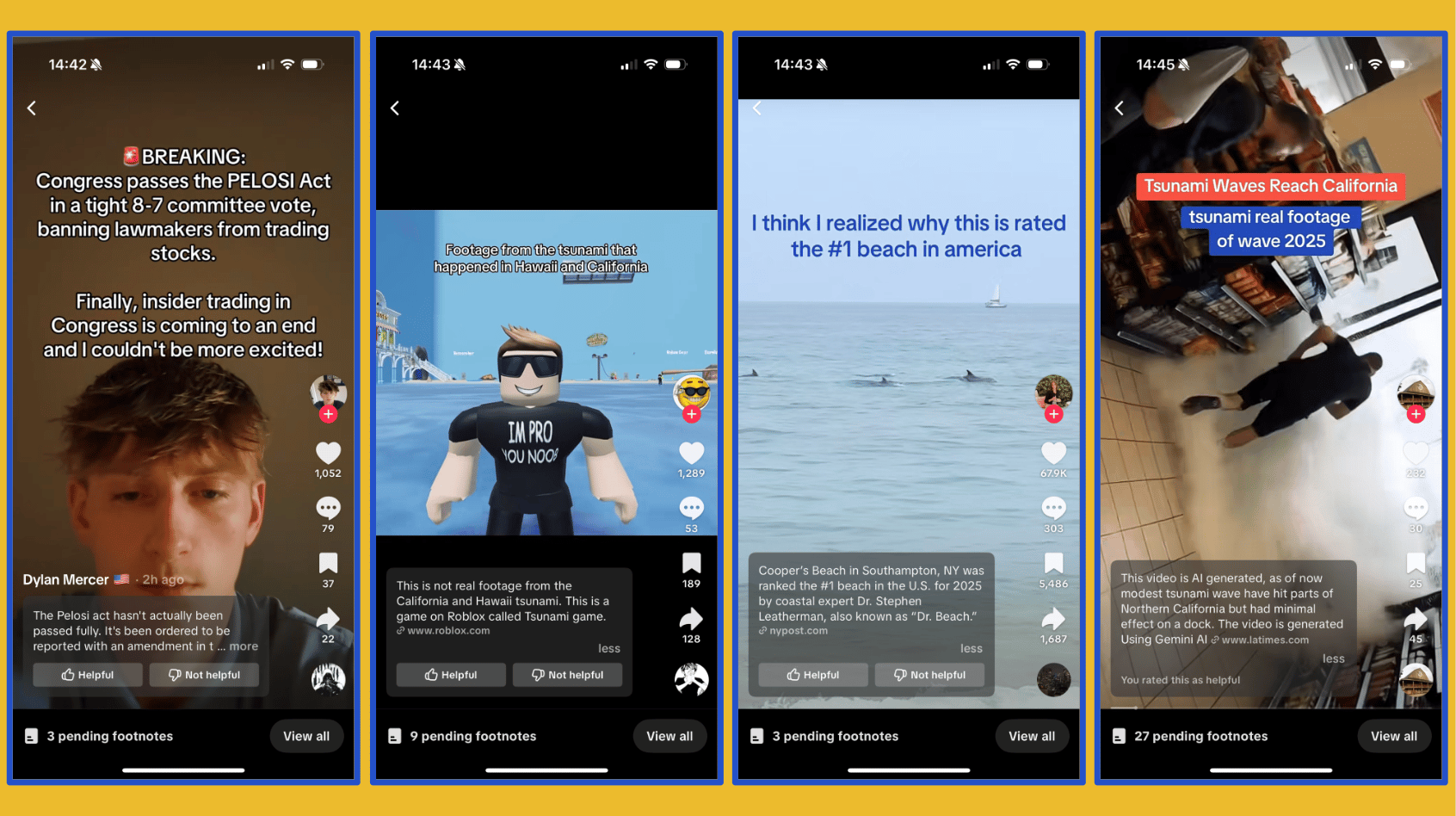

📍 TikTok’s crowdsourced moderation feature, Footnotes, is now live in the United States, with 80,000 contributors in the pilot program. So far, the Notes applied in the program are a mixed bunch.

📍 Grok and Google’s AI Overviews repeatedly got their facts wrong about tsunami warnings issued for America’s Pacific Coast.

📍 A Reddit user claims that a ChatGPT Agent successfully confirmed its humanity in a CAPTCHA on a video conversion website.

📍 Aos Fatos found 30 TikTok profiles spreading AI-generated misinformation about US-Brazil relations to millions of users amid an escalating trade war. The platform deleted 26 profiles after being contacted by the fact-checkers.

📍 The BBC revealed that a Russian-linked disinformation campaign cloned the voices of public sector workers, including an emergency medical advisor, and used them in its influence operations.

📍 The US House Judiciary Committee asked Spotify to provide more information about its COVID-19 misinformation policy that led to Joe Rogan getting “censored,” including any exchanges with European Union officials. The committee’s letter approvingly quotes Mark Zuckerberg’s infamous “More Speech, Fewer Mistakes” speech from January. Legal scholar Daphne Keller pointed out that while she shares concerns over the EU’s Digital Services Act, the Judiciary Committee’s latest report was unable to find evidence of censorship.

📍 UK users are reportedly circumventing age verification checks introduced on porn sites as a result of the Online Safety Act. They’re using profile pictures created with ChatGPT or taken from video games.

Tools & Tips

📍 Cyber Detective noticed that DuckDuckGo, the privacy-enhanced search engine, has a new option to hide AI-generated images from image search results. A quick test with a search for “lebron pregnant” showed that it removed only one of two obvious AI images in the top row of results. The tool cautions users that its “Block list is not exhaustive and may contain inaccuracies.”

📍 Impersonal.me allows you to “make Google searches as if you were searching from different countries and in different languages.” (via Mario Santella)

📍 DeepFind.Me offers a collection of OSINT tools and resources. (via The OSINT Newsletter)

📍 Filmot is a tool that allows you to search within YouTube subtitles, captions and transcripts. It says it indexes “over 1.33 billion transcripts across 1.18 billion videos and 72 million channels.” (via Cyber Detective)

📍 The Drone Database is a resource from the Center for a New American Security that lists information about drones from around the world. (via OSINTech)

Events & Learning

📍 I-intelligence is offering a free two-hour webinar about how to do online research using foreign languages such as Russian, Chinese, and Arabic. (Craig previously joined this free webinar and really enjoyed it.) Go here for info on how to register.

📍 The Journalist’s Resource is hosting a webinar, “Rebuilding trust in health reporting while covering misinformation” on August 19. Register here.

Reports & Research

📍 A large-scale experiment involving 12,500 participants conducted by researchers at Microsoft AI found that humans are on average depressingly bad at spotting AI-generated images. Respondents had a 62% success rate, which is not much better than a random guess. The experiment asked people to rate 15 images randomly selected from a sample of 350 “real” images and 700 fake faces contained in the Real Or Not? quiz.

📍 CCDH found that the reach of 44 misleading tweets about the January fires in Los Angeles by Alex Jones absolutely dwarfed the views received by a sample of news articles and posts from emergency responders.

📍 Two interesting studies out this month in the HKS Misinformation Review. In the first, researchers used NewsGuard’s ratings to compare the periods before and after Elon Musk’s acquisition of X and detected a decline in the average reliability of domains shared on the platform. In the second, corrections posted by other users were found to have a small and often insignificant effect on the perceived accuracy of false Facebook posts in Brazil, India, and the UK.

Want more studies on digital deception? Paid subscribers get access to our Academic Library with 55 categorized and summarized studies.

One Thing

There are lots of sites that use AI to scrape and rewrite articles from big news publishers. Clara Murray of the Financial Times shared what happened when one such site hit the FT paywall on her story about AI and the workforce:

Indicator is a reader-funded publication.

Please upgrade to access all of our content, including our how-to guides and Academic Library, and our live monthly workshops.