Our weekly Briefing is free, but you should upgrade to access all of our reporting, resources, and a monthly workshop.

This week on Indicator

📍 Craig analyzed 38 “situation monitoring” dashboards to determine which can actually be useful for investigators, journalists, and researchers. Learn about open-source options and view a detailed chart that breaks down their features.

📍 We published the video, transcript, slides, and AI-generated notes from our most recent monthly workshop. Craig demoed new tools he discovered while writing The Indicator Guide to investigating ecommerce sites. He also showed how to make a simulated credit card purchase at an online store in order to gather merchant/payment details.

📍 We’re thrilled to share that Indicator was shortlisted for the inaugural Canadian Journalism Foundation Hinton Award for Excellence in AI Safety Reporting. The award recognizes “exceptional journalism that critically examines the safety implications of AI.” The nomination is for our work exposing the scourge of abusive AI nudifiers.

Impact report

Tracking Indicator’s impact isn’t straightforward. An investigation might lead to changes inside an institution that never get reported. A workshop could enable someone to conduct an analysis or make connections that have tangible but confidential ramifications. But one thing we can track is the action that platforms take as a result of our reporting. We collect examples on our About page and will be sharing quarterly-ish reports with you. Here are a few highlights from the first three months of 2026:

Streaming services largely adopted a “Scout’s honor” system for AI labeling — and it shows

The Bandcamp profile of Valkyrae described it as a power metal project whose music is “written by Sal and composed by Suno AI.”

Bandcamp announced in January that it was “putting human creativity first” and banning all AI music from the platform. A couple of months later, Valkyrae’s AI-generated albums were still being streamed.

“Hell, Bandcamp has officially banned all AI music but guess what… my album is still up there for sale,” said the man behind Valkyrae in a recent post to an AI music Discord channel. (Emphasis his.)

Valkyrae is the artist name used by Sal Thomas of Chicago. In an interview with Indicator, he said he created the music in Suno and used a Digital Audio Workstation (DAW) to mix and master songs.

“As for the moral debate? Not my concern,” he said.

In response to the onslaught of AI-generated content, seven major music streaming services have implemented rules for synthetic music. The policies range from total prohibition to permissive self-disclosure. Platforms like Spotify, YouTube Music, and SoundCloud favor a “Scout’s honor” system, requiring uploaders to self-disclose AI use. Many of the policies are new (Apple Music updated its rules last month), and enforcement appears to be light-to-nonexistent. Users also have no way to opt out of AI-generated content recommendations.

The divide between Bandcamp and its peers is illustrated by recent comments from company leaders. Bandcamp general manager Dan Melnick recently called prompt-based music "meaningless" and the company said it has “the right to remove any music on suspicion of being AI-generated.” SoundCloud CEO Elijah Seton compared the ability for anyone to create AI music to how mobile phones made photography more accessible to the masses.

As with AI-generated images and videos, there isn't a foolproof way to detect synthetic music. Spotify said it’s developing an approach that uses AI to analyze song structure and audio signals, rather than relying on user-reported metadata.

Indicator reviewed the AI policies of seven major streaming services and produced a chart that compares each platform’s approach. We also joined multiple Discord servers and subreddits dedicated to AI music and passive-income schemes, to document how people are circumventing the policies.

| Platform | Policy announced | How are labels applied? | Label location | User agency |
|---|---|---|---|---|
| Apple Music | March 2026 | Manually by the uploader | On the “Now playing” interface | No opting out of AI music |
| Bandcamp | AI is banned | AI is banned | AI is banned | AI is banned |
| Deezer | January 2025 | Automated tool, platform-developed | On the “Now playing” interface | No opting out of AI music |
| SoundCloud | May 2025 | Manually by the uploader | Credits and metadata | No opting out of AI music |
| Tidal | March 2026 | No tools, relies on user reports of copyright/trademark infringements | Credits and metadata | No opting out of AI music |
| YouTube Music | July 2025 | Manually by the uploader and via automated metadata analysis | Located on artist bio and the “Now Playing” interface | No opting out of AI music |

Indicator found that despite platform promises to enforce disclosure of synthetic music and artists, AI music makers and digital schemers can easily circumvent the rules by omitting self-disclosure.

“Just do it. I doubt if anyone would come looking for you,” wrote a Facebook user named “Dad Tha Puppet” in an AI music Facebook group. They were replying to a person who raised concerns about the legal repercussions of uploading AI music without disclosure.

Sal Thomas, aka Valkyrae, was active in a Discord channel where users discussed strategies to monetize AI-generated content on Bandcamp. As of now, it seems fairly easy to do. Bandcamp’s enforcement systems failed to catch that Valkyrae’s bio said his music was created with Suno. Indicator also submitted five reports via the platform's reporting tool to flag that the profile violated the platform’s AI policy. Bandcamp removed the profile only after Indicator reached out for comment. (The company did not respond.)

Other platforms like Spotify and YouTube Music also failed to take action based on user-submitted reports, albeit with a small sample. It’s also worth noting that none of the streaming platforms list AI content as a category in the reporting flow. Users have to select trademark or copyright infringement or describe the issue in the “Notes” section of the report form.

For now, a lot of AI-generated music will be unlabeled and undetected by platforms — and users will be tuning into synthetic music, whether they know it or not. — Nasha Dutta

Deception in the News

📍 A medical researcher published two preposterously fake preprints about a made-up eye condition called Bixonimania in order to show how AI search tools can regurgitate unreliable information. Perhaps even more worrying is that the clearly fake research was also cited in peer-reviewed literature.

📍 The U.S. Attorney's Office for the Southern District of Ohio says it’s the first agency to convict someone under the Take It Down Act, which criminalizes nonconsensual deepfake nudes. The defendant's actions were truly vile — details are in the DOJ press release.

📍 The New York Times received a lot of pushback for its article about a two-person company called Medvi that will allegedly reach $1.8 billion in annual sales thanks to clever use of AI. As Futurism has reported, the company’s use of AI includes ads with synthetic doctors and webpages with fake customer testimonials. On Thursday, The Times added an Editor’s Note to the story flagging that Medvi is facing legal and regulatory challenges.

📍 The FBI says Americans lost $798 million to government impersonation scams in 2025. The number of cases almost doubled compared to the previous year. AI-related internet crimes accounted for more than $893 million in reported losses.

📍 A lawsuit filed in California alleges that Perplexity AI’s incognito mode is a “sham” and that search data is instead shared with third parties.

📍 India’s government is reportedly looking to regulate the placement of X Community Notes on content that deals with news and public affairs. The move would allow the government to “seek removal of content that corrects official claims,” according to the Hindustan Times.

Tools & Tips

When a person clicks the “copy link” button on an online post, the resulting share link often contains metadata. Depending on the platform, it might reveal the username of the person who generated the link, their country, and when the link was created, among other information.

Soxoj, a developer of useful OSINT tools like Maigret, released an open-source tool that extracts the data encoded in a share link. Called Sharetrace, it works with links generated via ChatGPT, Claude, Discord, Instagram, Microsoft SharePoint/OneDrive, Perplexity, Pinterest, Substack, Suno, Telegram, and TikTok.

I installed Sharetrace and generated a share link from an Instagram Reel. The above graphic shows the share URL and what I extracted using Sharetrace.

Two things to note: the info you get varies by platform, and Sharetrace only works with share links generated from the mobile versions of Instagram and TikTok. Other platforms work with web and mobile. — Craig

📍 Google recently announced the ability for people to change their Gmail address without having to create a new account. That’s good news for folks who picked an address like [email protected] and want to transition to a more professional email without having to start over. But the change may make things a bit more difficult for investigators. The good news is that the person’s Google ID remains the same, which is critical for pulling data about their Google reviews. But investigators should note a few changes. 1v0t wrote an overview of what does and doesn’t change as a result. (Note: I haven’t tested this for myself.)

📍 Henk van Ess launched Fact-Check Database, which enables you to search through “debunked images from Reuters, Snopes, PolitiFact, AFP, BOOM Live, Lead Stories, Full Fact and 100+ fact-checkers worldwide.” More info here.

📍 My OSINT Training added the Snapchat Bitmoji Historical Avatar Viewer to its list of useful OSINT bookmarklets. (Read our guide to bookmarklets.) The bookmarklet enables you to view historical Bitmoji avatars associated with a Snapchat account. MOT also updated the de-blur bookmarklet, which can remove the blurring effect from content on some sites.

📍 Bellingcat released the Iran Conflict Damage Proxy Map, an open-source tool that uses “a statistical test on Synthetic Aperture Radar (SAR) imagery captured by the Sentinel-1 satellite” to assess conflict damage across Iran.

📍 PropagandaScope is a free website that aggregates content from Chinese state media, including 20 Chinese provincial party newspapers and the People's Daily.

📍 Benjamin Strick published the latest edition of his Field Notes newsletter. It looked at “fitness trackers, shadow fleet, satellite blackouts and how to investigate with Strava.”

📍 Toddington International wrote, “The New Era of Digital Photos: How AI Is Reshaping Visual Evidence.”

📍 OutX offers a free tool that lets you view a LinkedIn profile without being logged in or on LinkedIn’s website. (Via Cyber Detective.) The results are very limited. But even more helpful is the company’s chart that breaks down the profile information that you typically can and can’t see, depending on whether you’re logged in, logged out, or a Premium user:

Events & Learning

📍 Last call for our party in Perugia! Indicator’s first in-person event is next Thursday at the International Journalism Festival. We’re hosting an aperitivo with Fundación Maldita.es, Pagella Politica, and the Wikimedia Foundation. Details and link to RSVP are here. Attendance is reserved for members so if you’re attending IJF, why not become a member so you can join us?

Reports & Research

📍 Grants overtook Meta as the largest source of revenue for the world’s fact-checking organizations in 2025, according to the International Fact-Checking Network. Related: a new study looks at the frustrations of civil society leaders and researchers in the Global South with funders of anti-disinformation efforts.

📍 A NYT-commissioned analysis of Google’s AI Overviews found errors in about 10% of results.

📍 Maldita found 170 Meta Verified accounts running scam ads using the likeness of athletes, celebrities, journalists and politicians. Some are impersonators, others are hacked accounts.

📍 A report by several disinformation experts commissioned by the EU's Joint Research Centre advocates, among other things, for a “progressive digital advertising tax” that would incentivize subscription-based platforms over engagement-driven ones.

📍 A study of deceptive networks that were active during the 2020 U.S. elections and subsequently blocked by Meta found that organic reshares by unaffiliated accounts were essential to helping them reach 37 million Facebook and 3 million Instagram users.

Want more studies on digital deception? Paid subscribers get access to our Academic Library with 75 categorized and summarized studies:

One More Thing

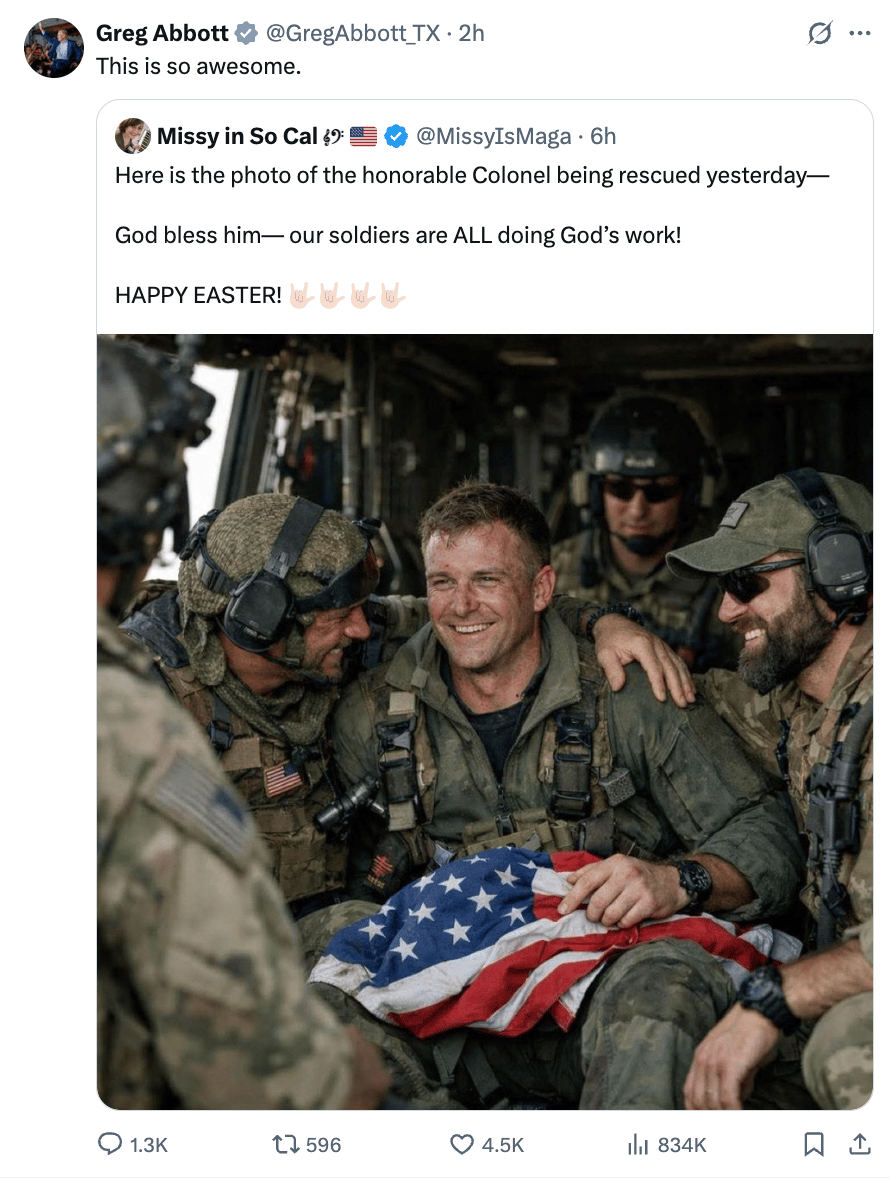

Oh nothing, just the governor of Texas falling for an AI-generated image that falsely claimed to show the Air Force weapons systems officer who was recently rescued from Iran:

The image carried a “Made with AI” label in the bottom left, not that the governor (or whoever was running his account) seemed to notice.

Indicator is a reader-funded publication.

Please upgrade to access all of our content, including our how-to guides and Academic Library, and to join our live monthly workshops.