Our weekly Briefing is free, but you should upgrade to access all of our reporting, resources, and a monthly workshop.

This Week on Indicator

Craig published an investigation into a real German doctor who hid behind a pseudonym to flood social media with AI-generated health slop — and sell books off the back of it. It was reported in partnership with NDR, the German public broadcaster, which also published a video.

Each day, tens of thousands of users ask Grok, X’s chatbot, to fact-check a tweet. So Alexios built a free public dashboard to display data about the tweets that generated the most English-language Grok fact-checking requests. Check it out! Using data from the tracker, he wrote about Grok’s reliance on mainstream sources and a successful effort to game Community Notes. (ICYMI this is our second free dashboard focused on misinformation on X; we also have one tracking AI-written contributions to Community Notes.)

We also announced the beta of OSINT Navigator, a new project in partnership with Tom Vaillant, a journalist and technologist, to help investigators find the right tool(s) for a specific task or need. Navigator draws on a database of almost 7,500 tools. Indicator members receive early access to Navigator, additional daily queries, and the ability to use MCP to pull data via their preferred AI platform. Read more here.

Instagram's AI thirst traps are speedrunning deception — and the grift just got deeper

Roughly two weeks ago I revealed how AI-generated Instagram influencers are using fake celebrity photos to funnel followers into paid 18+ content. In the time since, I found 10 additional accounts with nearly 3 million total followers running the same grift. And one of them revealed a new layer of deception I hadn't seen before.

Here’s @jessicaa.foster in her military uniform with President Donald Trump, Lionel Messi, and Cristiano Ronaldo:

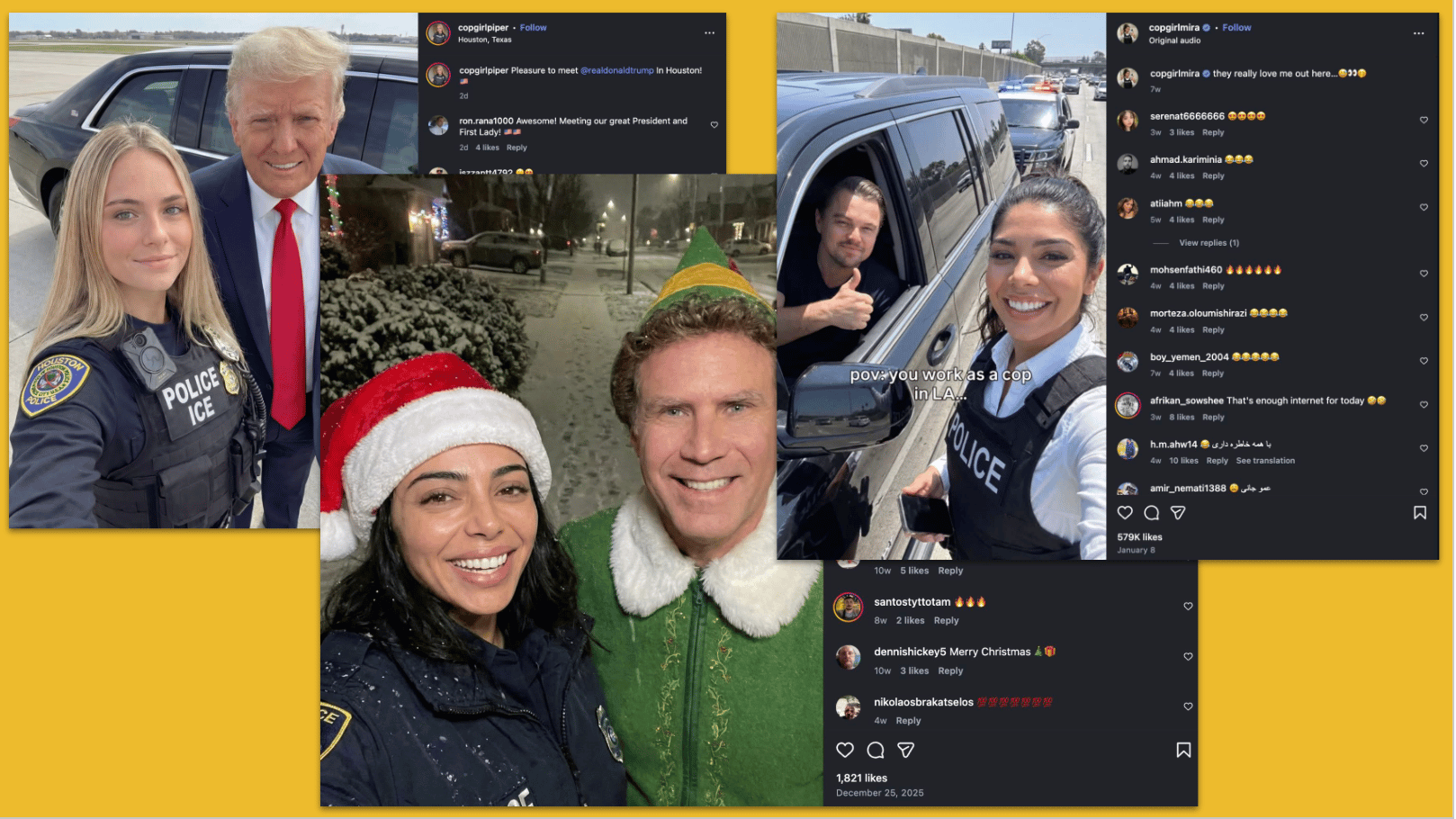

And @copgirlpiper and @copgirlmira with Will Ferrell, Leonardo DiCaprio, and Trump:

It’s a hustle that entices Instagram users with a sexy female avatar, spikes engagement with fake celebrity photos, and drives aroused followers to a monetized 18+ profile on Fanvue, which allows synthetic content. (Side note: many of the accounts post feet pics, an apparent lure for the highly engaged foot fetish community.)

None of the accounts disclosed that their personas and content are AI-generated.

When I reached out to Meta for my initial story, it removed the accounts that had posted photos with celebs. But it didn’t remove many of the other synthetic influencers that failed to label their content or account as AI-generated. I sent the 10 new celeb-bait accounts I found to the company. A spokesperson said they would review them. (All except one remain online as of this writing.)

Aside from showing how effective this hustle is on Instagram, the new set of profiles revealed how some accounts manage to operate on OnlyFans, which has stricter policies about AI-generated content than Fanvue, a competitor.

The fake soldier @jessicaa.foster has 1 million Instagram followers and an OnlyFans, a potent combination. I contacted OnlyFans to ask if it was aware that the account was using synthetic images. A spokesperson told me that the account belongs to an ID-verified creator. That means a real woman is apparently behind it. I subscribed for free to view Jessica’s OF photos and that’s when I discovered the next layer of the grift.

The images on Jessica’s OF appear to feature a real woman — but they hide her face. The strategy enables the OF creator to conceal her face and identity, while cashing in thanks to a synthetic, engagement-baiting public persona.

It’s a mind-bending ouroboros of deception and grifting. And the platforms seem unable or unwilling to stamp it out. What a time to be online. — Craig

Deception in the News

📍 Several friends of Indicator were among the people offered up for an “expert review” by AI editing platform Grammarly. (Craig was also in there, but I wasn’t — I’m not mad about it.) The authors didn’t provide their consent and were not thrilled about the inane advice they were credited with dispensing. Grammarly initially offered to remove any experts who opted out via email. It later discontinued the feature, perhaps because journalist Julia Angwin filed a class action lawsuit. — Alexios

📍 The EU is moving towards a complete ban on AI nudifiers. According to POLITICO, the proposal could become law over the summer.

📍 Meta is introducing new alerts inside its suite of apps that will warn users about common scam signals and tactics.

📍 Der Spiegel found that one of the agencies it licenses photos from was uploading AI-generated or altered images tied to the Iran war. The publication was one of multiple media outlets in Germany and the Netherlands that published the images.

📍 YouTube is expanding its likeness detection tool that scans for unauthorized AI replicas of people. It’s launching with “a pilot group of government officials, journalists, and political candidates.”

📍 Russian information operations continue to impersonate Western brands and politicians to spread disinformation. Recent targets include German media outlet Correctiv and Parisian mayoral candidate Pierre-Yves Bournazel.

📍 A group of noncitizen content moderation and fact-checking experts living in the United States is suing the Trump administration over its stated policy to curtail visas for people involved in such work. The lawsuit was filed by the Knight First Amendment Institute at Columbia and the nonprofit Protect Democracy on behalf of the Coalition for Independent Technological Research. (Knight’s Jameel Jaffer wrote a little bit about the suit here.)

📍 The Oversight Board released its decision on an AI-generated video about the Iran-Israel conflict that was published on Facebook in June 2025. The board found that Meta needs to do a lot more to detect and label high-risk synthetic content.

Your Ad Goes Here

Indicator reaches a highly engaged audience of more than 12,000 investigators, threat analysts, journalists, researchers, and trust and safety professionals. Contact us at [email protected] to discuss rates and opportunities.

Tools & Tips

A reminder that tools + a lack of expertise = flawed analysis

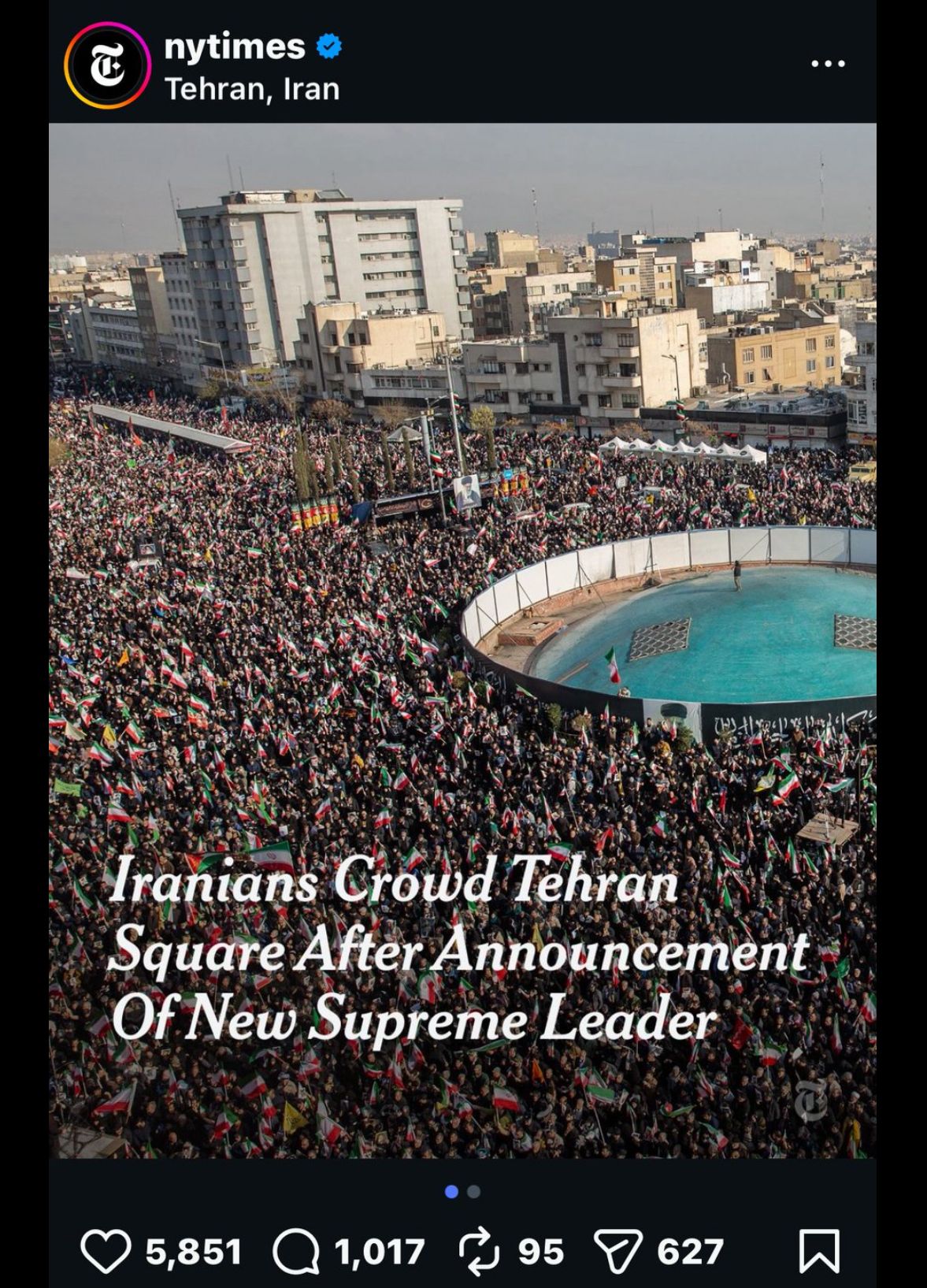

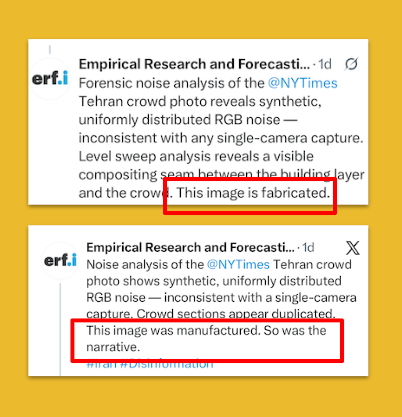

On Monday, amid the onslaught of AI-generated war footage circulating on social media, the X account for the “Empirical Research and Forecasting Institute” accused the New York Times of publishing a “manufactured” and “fabricated” photo of a crowd in Tehran.

This is a screenshot of the Instagram post that set off the ERFI:

The ERFI, which says it is cofounded by a PhD scientist based in Australia, posted an X thread that said the image “shows signs of digital manipulation” and was “manufactured” and “fabricated.”

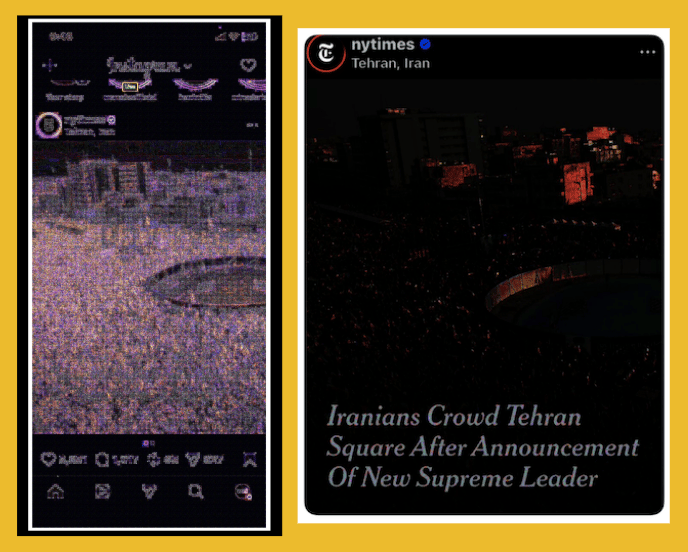

As evidence, it shared screenshots of forensic analyses it ran, including Error Level Analysis. Here are two of them. Can you spot the problem?

Incredibly, the ERFI performed at least some of its analysis using screenshots of the Instagram post. I don’t mean that they screenshotted or downloaded a low resolution version of the image from social media. No, they actually screenshotted an Instagram post and ran it through image forensics tools.

A golden rule of image forensics is that file size/quality is hugely important. A higher quality file equals better results. An image downloaded from social media is a poor test image. But a screenshot of an image and its surrounding social media platform elements? Basically useless.
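For readers who script parts of their workflow, the rule above can be turned into a rough pre-flight check that runs before any forensic tool. What follows is a minimal Python sketch, not a forensic method: the function names `sniff_image` and `preflight_warnings` are hypothetical, and the heuristics are assumptions (screenshots are often saved as PNG, and platforms often strip EXIF metadata when they re-encode uploads). It only inspects the file's first bytes and cannot say whether an image is authentic.

```python
# Magic bytes that open PNG and JPEG files.
PNG_MAGIC = b"\x89PNG\r\n\x1a\n"
JPEG_MAGIC = b"\xff\xd8\xff"


def sniff_image(data: bytes) -> dict:
    """Report basic facts about a file's bytes (hypothetical helper)."""
    if data.startswith(PNG_MAGIC):
        fmt = "png"
    elif data.startswith(JPEG_MAGIC):
        fmt = "jpeg"
    else:
        fmt = "unknown"
    # EXIF in a JPEG sits in an APP1 segment whose payload starts with
    # "Exif\0\0". Assumption: it appears within the first 64 KB.
    has_exif = fmt == "jpeg" and b"Exif\x00\x00" in data[:65536]
    return {"format": fmt, "has_exif": has_exif, "size_bytes": len(data)}


def preflight_warnings(info: dict) -> list[str]:
    """Return reasons to distrust ELA output for this file (heuristic)."""
    warnings = []
    if info["format"] != "jpeg":
        # ELA compares an image against a re-saved JPEG copy of itself,
        # so a non-JPEG input (for example a PNG screenshot) is a red flag.
        warnings.append(
            "Not a JPEG: this may be a screenshot or a re-encoded copy."
        )
    elif not info["has_exif"]:
        # Missing EXIF does not prove anything, but it often means a
        # platform re-compressed the file after upload.
        warnings.append(
            "No EXIF found: the file may have been re-encoded by a platform."
        )
    return warnings
```

To try it on a local file, read the bytes first, for example `preflight_warnings(sniff_image(open("photo.jpg", "rb").read()))`, where `photo.jpg` is a placeholder name. An empty list means only that none of these basic warnings fired, not that the file is a suitable original.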

The fact that the ERFI (which seems to only exist on X and on a since-deleted Google Sites page) used screenshots to run the analysis shows that it doesn’t understand how to use such tools, let alone interpret the results.

Unfortunately, ERFI used its flawed analysis to make false accusations, attracting over 600,000 views for the first tweet in its thread.

The New York Times replied to correctly point out that the analysis was “based on a re-posted version of the originally posted image, which misrepresents standard image compression behavior.”

ERFI subsequently deleted the tweets that said the image was manufactured/fabricated. And it tried to suggest it was just reminding the media of the importance of verification:

Yes, the Iranian regime has shared manipulated media. Yes, you need to be vigilant. No, that doesn’t mean you can throw a screenshot of an Instagram post into an ELA tool, call it analysis, and accuse someone of publishing a fabricated photo.

I sent a Facebook message to Dr. Hooshang Lahooti, the Australia-based scientist who is publicly listed as the cofounder of ERFI, for comment. He didn’t reply. (I also emailed him at his University of Sydney email, but it bounced back.)

This is what happens when engagement incentives meet forensic cosplay. — Craig

📍 Aleksander K launched Honeyplot, “a simple, browser-based tool for mapping relationships between entities and individuals.” Think of it as a scaled-down version of the mapping you can do with Maltego. Also similar to Osinttracker.

📍 Sarah W wrote a helpful guide to vetting browser extensions for OSINT.

📍 Mark Fenton revamped the OSINT Canada site, which offers OSINT tools and also links to his Start.me page of OSINT resources in the country.

📍 Benjamin Strick wrote a new edition of his Field Notes OSINT newsletter. He also published a YouTube video, “How I Geolocated the Taliban in the Afghan Desert: Let's Geolocate #8.”

📍 Henk Van Ess wrote, “How I Taught AI to Catch Fakes I Can't See.” He also did an X thread about recent updates to Image Whisperer, a tool for detecting AI-generated images.

📍 Rae Baker wrote, “Freeports: Inside the World's Most Secretive Financial Infrastructure.”

📍 Dennis Keefe wrote, “OSINT Profiles: Analyzing the Small vs. Large Footprint.”

Events & Learning

📍 Henk Van Ess is giving a free webinar for the Global Investigative Journalism Network on March 26, “Detecting AI-Generated Content: Updated Tools and Techniques.” Register here.

Reports & Research

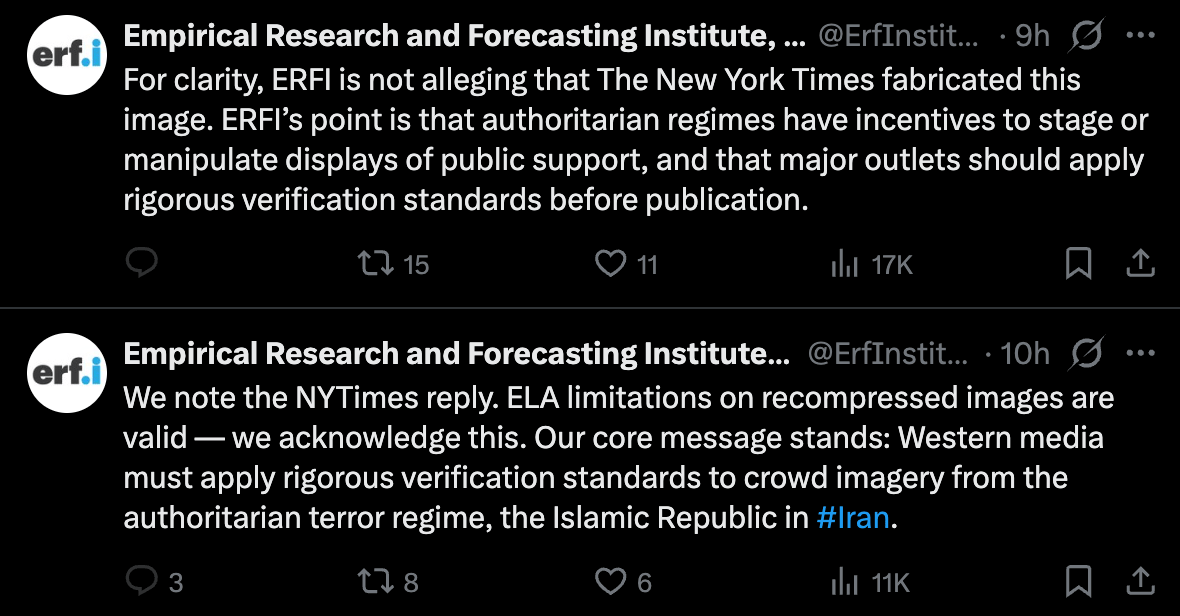

📍 In a new preprint, a group of communications researchers looked at how Google reverse image search performed on searches for viral manipulated media over a 15-day period in the summer of 2024. TL;DR: not great: "Google RIS returns a substantial volume of irrelevant information and repeated misinformation, whereas debunking content constitutes less than 30% of search results."

📍 ABC News published an immersive report into 20 Facebook pages — mostly based in Vietnam — that targeted Australian users with AI slop-supported political fake news. Worth a look.

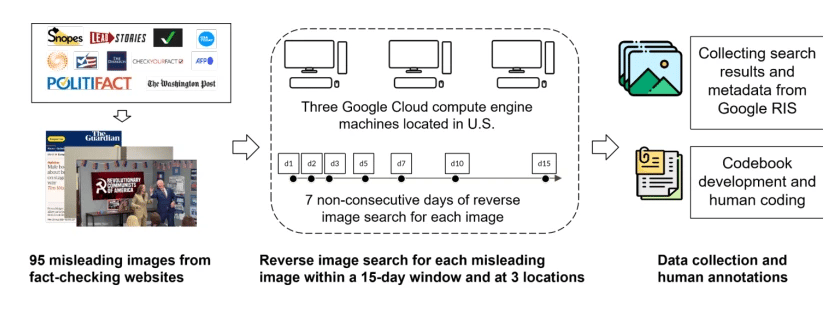

📍 This valuable preprint (h/t Thomas Renault) looked at Grok’s performance during four “misinformation events” in 2025. The author concluded that “the probability that Grok provides a correct response increases as fact-checks accumulate.” It’s the nth reminder that you can’t really do crowdsourced or AI-powered debunking if you’re also trying to dismantle the fact-checking industry.

This is of course particularly relevant during a week when Grok kept making stuff up about the US-Israel war on Iran, got corrected by X’s chief of product, and has apparently been deleting its mistakes.

📍 Claude performed best in an experiment that tested LLMs’ resistance to prompts geared towards committing academic fraud or publishing junk science. The newer versions of OpenAI’s models placed second. Grok and gpt-4o-mini did worst.

📍 Data & Society published a detailed policy brief, “Deepfake Financial Fraud: The Global Regulation of AI-Driven Scams.” It examines regulatory approaches around the world, identifies gaps, and makes recommendations for how governments, platforms, and other groups can tackle the scourge of deepfake scams. (Craig read early versions of the report and offered suggestions.)

📍 Graphika identified a network of synthetic dating profiles that were used in a romance scam. The report found that “actors use AI-generated media and text to boost the scale and quality of romance scams, as they migrate from impersonating celebrities and stealing profile images to creating richly detailed fake personas.”

Want more studies on digital deception? Paid subscribers get access to our Academic Library with 55 categorized and summarized studies.

One More Thing

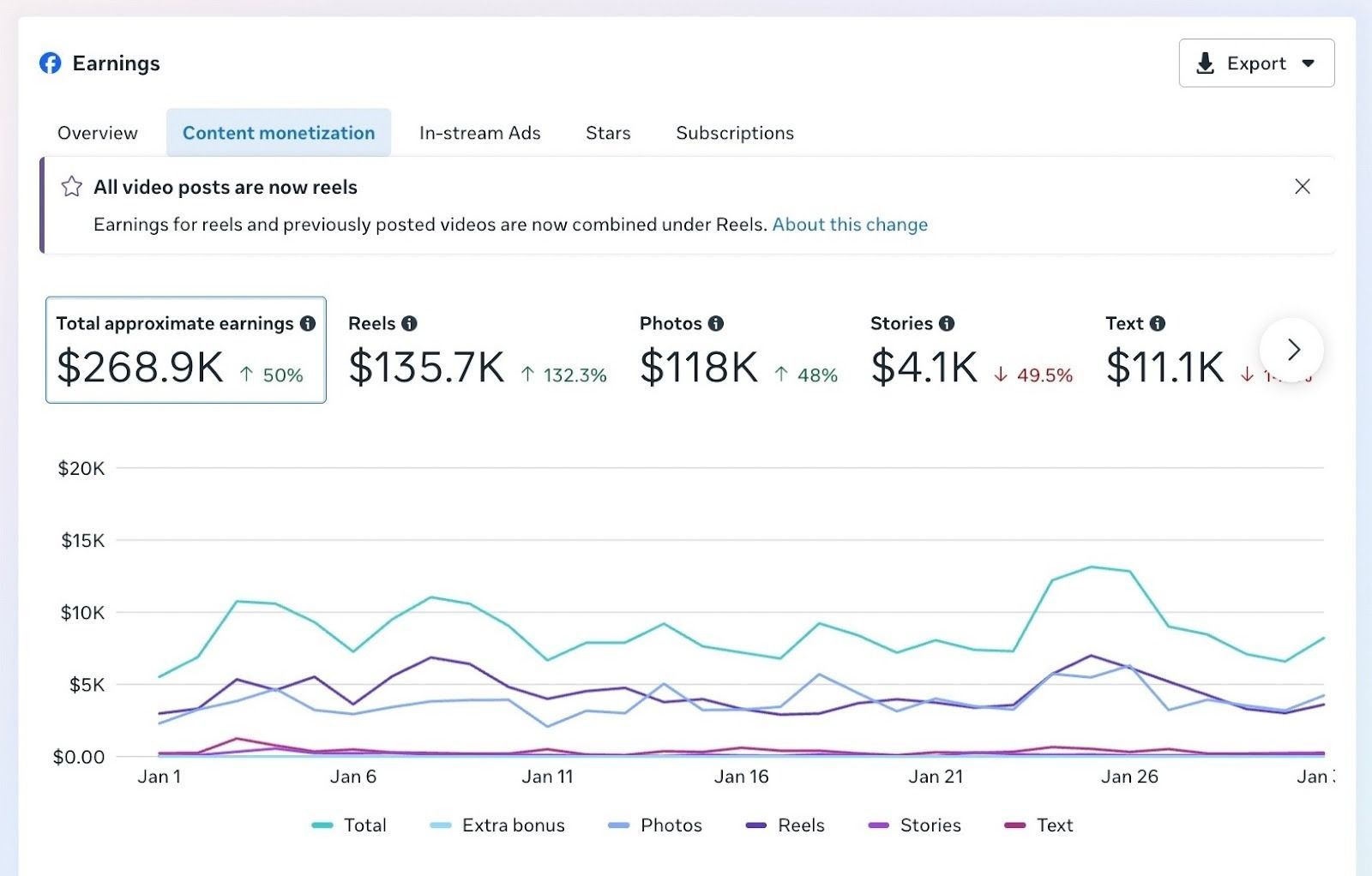

Kyle Tharp writes Chaotic Era, a great newsletter about politics in the digital age. He recently spoke to political influencers about how much money they’re earning from Meta’s content monetization program, which pays creators based on engagement.

Tharp shared a screenshot of a single month of earnings from one person:

Indicator is a reader-funded publication.

Please upgrade to access all of our content, including our how-to guides and Academic Library, and our live monthly workshops.